It’s much simpler than Airflow, but that’s a benefit to most. That means you can execute workflows on any server and monitor them from Prefect’s cloud portal.

However, there is a paid cloud version too, which is one of its major differentials from Airflow. Prefect is fresh and modern, but is also an open-source project. Airflow was the only real option available for orchestration at scale, but fitting very complex projects into Airflow can be tricky, particularly in the case of ML projects. Issues with AirflowĪirflow was designed by a huge enterprise – Airbnb – and is therefore angled more towards large and enterprise deployments.

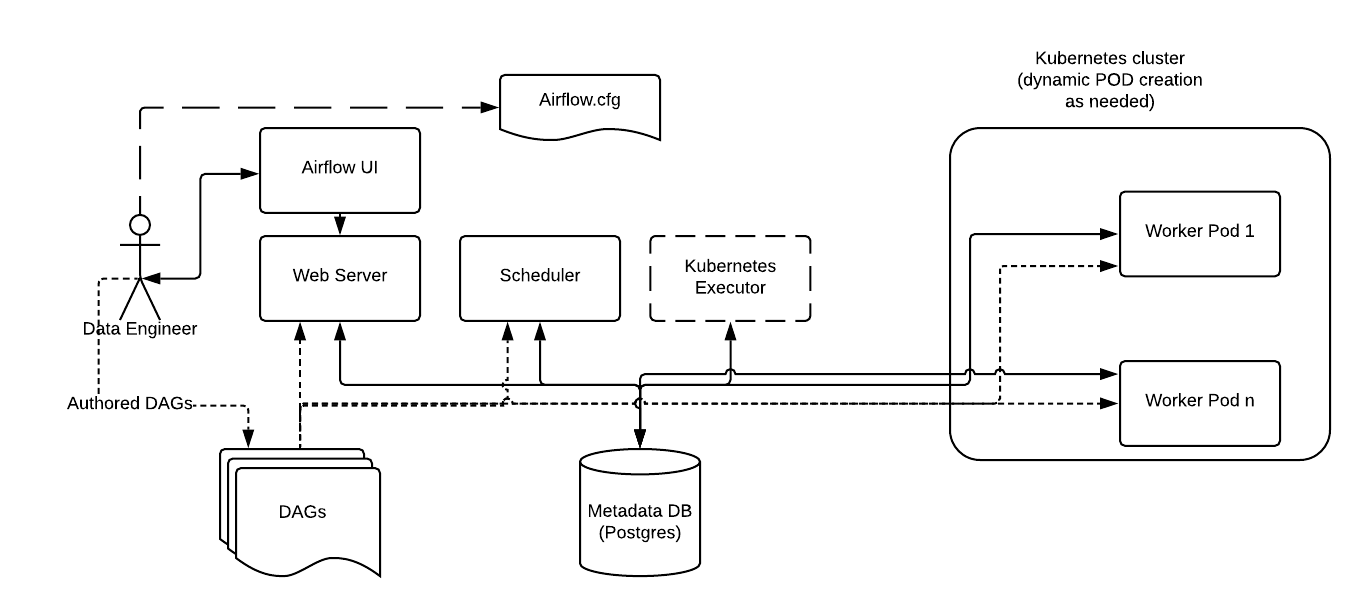

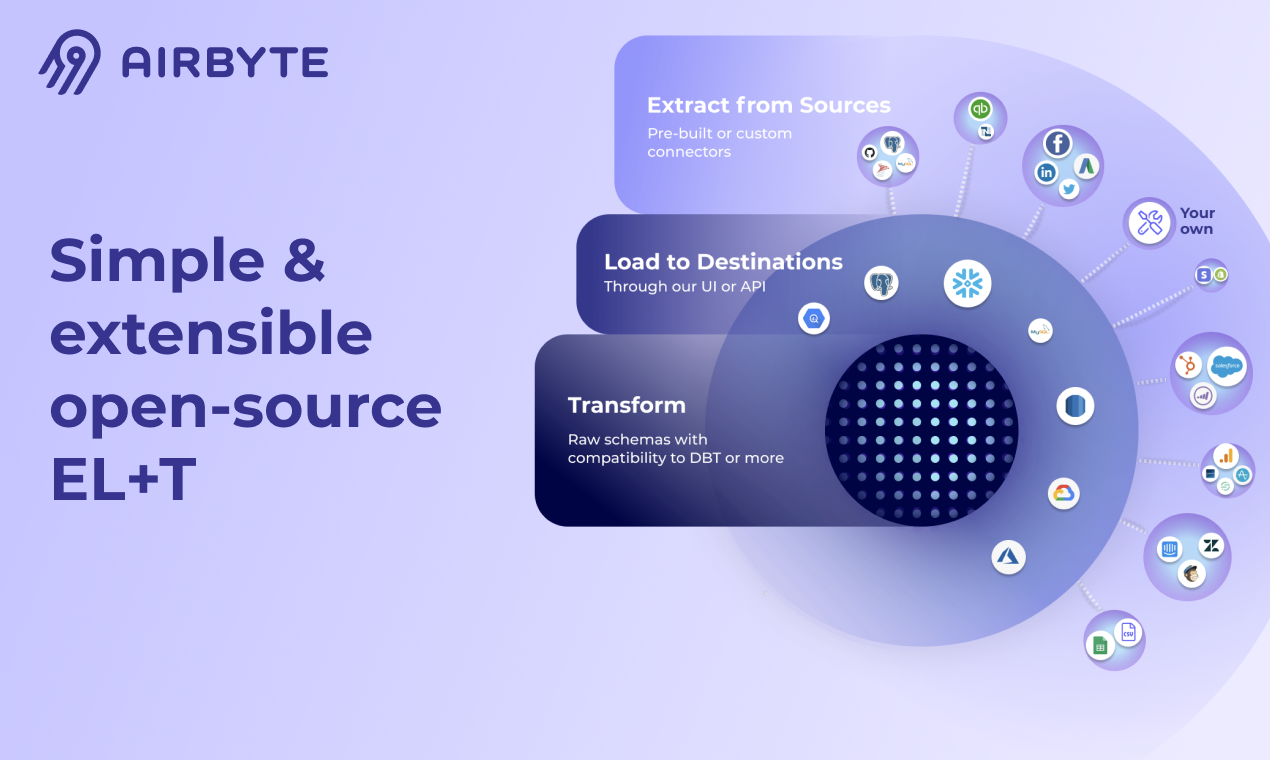

In addition, Airflow connects to cloud services like AWS and is backed by a huge community. Airflow is used by at least 10,000 large organisations, including Disney and Zoom. Written in Python, Airflow is popular amongst developers and is designed to be distributed, flexible and scalable while handling complex business logic. Here, we’ll be comparing Airflow and Prefect.Īn Apache project, Airflow has become the go-to workflow orchestration tool that is well-suited to medium to large-scale businesses and projects. MLFlow: Orchestration specifically for ML projects.KubeFlow: For Kubernetes users that want to define tasks with Python.Prefect: Prefect has become a key competitor to Airflow, but provides a cloud offering with hybrid architecture.Dagster: Dagster is more similar to Prefect than Airflow, working via graphs of metadata-rich, functions called ops, connected by gradually typed dependencies.It’s simpler for Python users than Airflow overall. Luigi: Luigi is a Python package for building data orchestration and workflows.Airflow is written in Python and is probably the go-to workflow orchestration tool with its easy-to-use UI. Apache Airflow: Originally developed by Airbnb, Airflow was donated to the Apache Software Foundation project in early 2019.This is a relatively new category of tools, but there are already quite a few options, including: The end goal is to create a dependable, repeatable, centralised workflow for orchestrating data pipelines and MLOps-related tasks. DevOps tasks, like submitting Spark jobs.Extracting batch data from multiple sources.Managing pipelines that change at relatively slow, even intervals.Monitoring data flow between APIs, warehouses, etc.Workflow orchestration tools connect to a wide range of data sources, e.g. 
Workflow orchestration tools enable data engineers to define pipelines as DAGs, including dependencies, then enabling them to execute tasks in order.Īdditionally, workflow orchestration tools create progress reports and notifications that enable team members to monitor what’s going on.
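
Airflow and Prefect each have their own APIs for declaring tasks and dependencies, and we won’t reproduce them here. The shared core idea is a pipeline defined as a DAG of tasks that runs in dependency order and reports on progress. That idea can be sketched with only Python’s standard library (Python 3.9+ for `graphlib`). This is a toy illustration under our own assumptions, not how either tool is implemented. `run_dag`, the status strings and the `notify` callback are hypothetical names, and in this sketch a failed task simply causes everything downstream of it to be skipped.

```python
from graphlib import CycleError, TopologicalSorter  # stdlib, Python 3.9+
from typing import Callable, Dict, Iterable

# Hypothetical task type for this sketch: a zero-argument callable.
Task = Callable[[], None]


def run_dag(
    tasks: Dict[str, Task],
    deps: Dict[str, Iterable[str]],
    notify: Callable[[str], None] = print,
) -> Dict[str, str]:
    """Toy orchestrator (hypothetical, not Airflow's or Prefect's API).

    `deps` maps each task name to the names of its upstream tasks. Tasks run
    in topological order, and the returned dict is a per-task status report.
    A task that raises is marked "failed" and reported via `notify`. Every
    task downstream of it is marked "skipped" and never runs.
    Raises graphlib.CycleError if the dependencies contain a cycle.
    """
    graph = {name: set(deps.get(name, ())) for name in tasks}
    unknown = set().union(*graph.values()) - set(tasks)
    if unknown:
        raise ValueError(f"unknown upstream tasks: {sorted(unknown)}")

    status: Dict[str, str] = {}
    for name in TopologicalSorter(graph).static_order():
        # Upstream tasks always appear earlier in a topological order,
        # so their status is already known here.
        if any(status[up] != "success" for up in graph[name]):
            status[name] = "skipped"
            continue
        try:
            tasks[name]()
            status[name] = "success"
        except Exception as exc:  # record and report instead of crashing
            status[name] = "failed"
            notify(f"task {name!r} failed: {exc}")
    return status


if __name__ == "__main__":
    # Hypothetical pipeline: extract -> transform -> load, plus a report
    # that depends only on extract.
    def failing_transform() -> None:
        raise RuntimeError("bad row")

    report = run_dag(
        tasks={
            "extract": lambda: print("extracting"),
            "transform": failing_transform,
            "load": lambda: print("loading"),
            "report": lambda: print("reporting"),
        },
        deps={"transform": ["extract"], "load": ["transform"], "report": ["extract"]},
    )
    # "load" is skipped because "transform" failed; "report" still runs.
    print(report["load"], report["report"])  # skipped success
```

Real orchestrators layer much more on top of this, such as scheduling, retries, persisted state and a monitoring UI. Ordering tasks by their dependencies is the same basic idea, though.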
